Our Investment in Reality Defender: Protecting Businesses from the Global Deepfake Explosion

Illuminate Financial is proud to partner with Reality Defender, expanding the Series A to $33M to combat the global threat posed by deepfakes. This round includes new investors Booz Allen Ventures, IBM Ventures, the Jefferies Family Office, Accenture, and Gaingels, along with follow-on investments from DCVC, Y Combinator, and the Partnership Fund for New York City.

Reality Defender is a real-time deepfake detection platform that enables enterprises to counter sophisticated threats from synthetically altered images, videos, audio, and documents. The technology is trusted by leading financial institutions, media companies, and government agencies to safeguard their organizations and customers.

History: Deepfakes are breaking the link between identity and ownership

Deepfakes are highly realistic synthetic audio, images, and videos of people, created using AI and machine learning, that the human eye or ear cannot distinguish from authentic content. Although this is a relatively recent concern, notable deepfakes emerged in 2017, such as a video of former President Barack Obama lip-synced to an audio clip. “Faceswapping” is another form of deepfake that gained popularity: the faces of famous actors and actresses were swapped into scenes they never appeared in, creating fabricated videos.

However, creating deepfake videos was resource-intensive. It took multiple days of total training time on an NVIDIA GTX 1080 Ti GPU (the highest-tier consumer-grade video card at the time), using a dataset of 10,000+ images of a celebrity extracted from video frames, usually from YouTube. This worked well for celebrities, for whom there was an abundance of content online and enough examples of facial angles to create a deepfake.

Generating individual deepfake images from a trained model was also slow. It took about 18 minutes to create 1 minute of video, and the quality was poor and easily detectable by humans. Early deepfakes had another major limitation: each pair of people required its own model. The process was therefore inefficient, and only a small number of low-quality deepfakes made it onto the internet.

Synthesia, founded in 2017, was one of the early pioneers using computer vision to create somewhat realistic digital humans. Their platform was used for dubbing over text in training videos for learning & development. In 2020, the platform gained popularity, driven by the global shift to remote work.

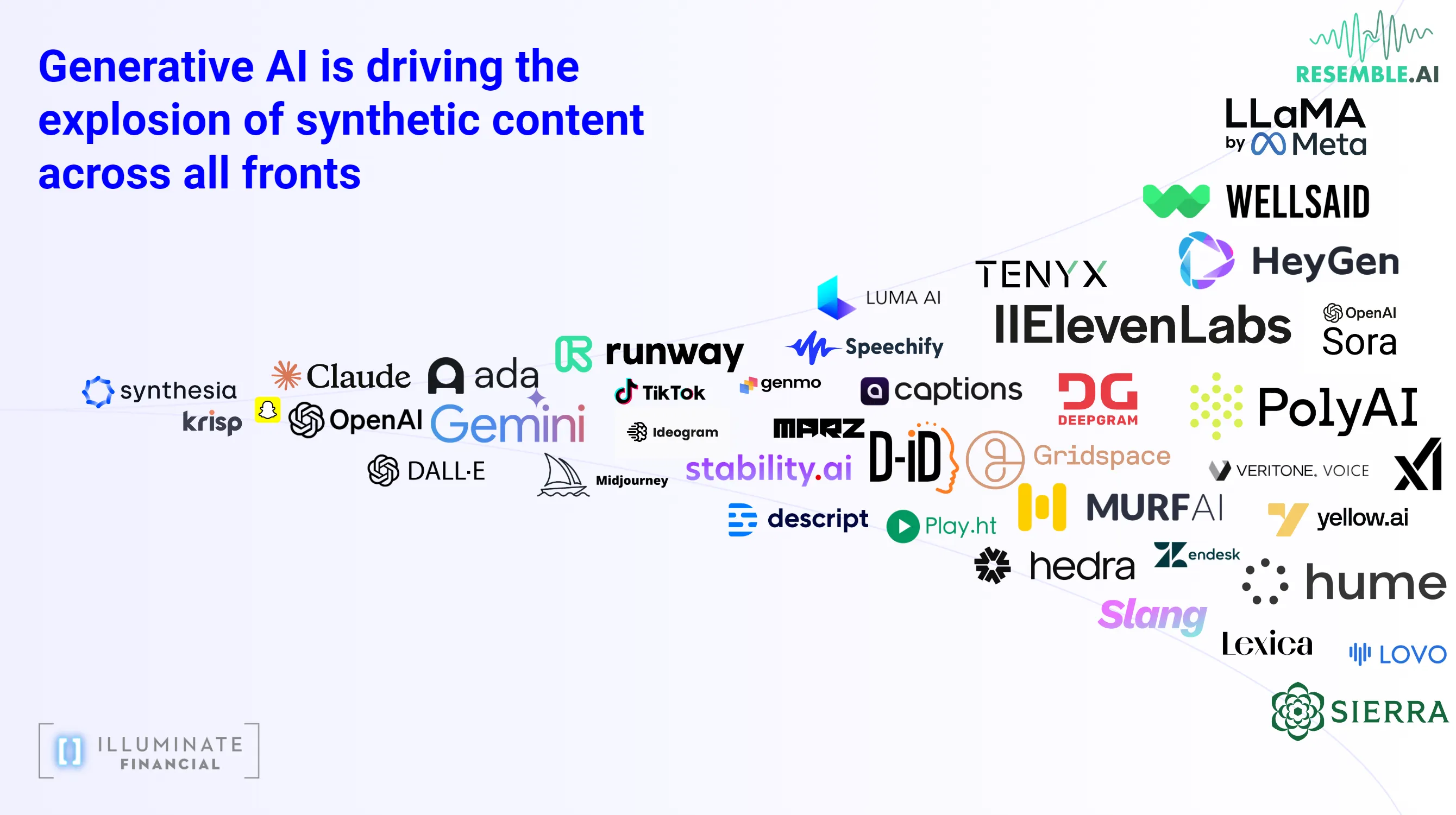

Pandora’s Box has been opened: Deepfakes + Generative AI = Explosion of Deepfakes at Scale

Significant Decline in Cost and Increase in Accessibility

Generative AI has revolutionized the creation of deepfakes at scale: it has dramatically 1) reduced cost (from dollars to cents), 2) reduced time (from multiple days of training to minutes or seconds), and 3) increased quality. For example, for as little as a $5 ElevenLabs subscription, anyone can create deepfake audio of any person they know. It takes about 5 minutes on the website to create a voice clone.

As a result, institutions that have implemented “my voice is my password” are particularly susceptible to deepfake attacks.

Deepfakes are a Global Threat

Deepfakes are recognized as a national and global threat, with agencies like the NSA, FBI, CISA (Cybersecurity and Infrastructure Security Agency), DARPA, and DHS raising concerns:

“Threats from synthetic media, such as deepfakes, present a growing challenge for all users of modern technology and communications, including National Security Systems (NSS), the Department of Defense (DoD), the Defense Industrial Base (DIB), and national critical infrastructure owners and operators.” — NSA, FBI, CISA

According to Onfido, the number of deepfakes grew by 3,000% from 2022 to 2023, and we expect this number to climb higher in 2024 and beyond. The sophistication and orchestration of cyberattacks leveraging deepfakes are also advancing. In speaking with cybersecurity experts, we were alarmed by the complexity and creativity of the specialized adversaries who use deepfakes to carry out these attacks.

The deepfake headlines we see in the news are merely the tip of the iceberg. Enterprises face a much larger and more diverse range of deepfake attacks daily. Deepfakes are only getting more sophisticated and will be increasingly difficult to detect and combat.

Reality Defender protects communication channels for companies handling critical transactions and data

Reality Defender is a cutting-edge platform built to detect advanced synthetic media attacks in real time.

Reality Defender uses AI to combat AI, leveraging an ensemble of sophisticated AI models to detect deepfakes in multiple modalities — images, audio, video and text.
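To make the idea of an ensemble concrete, here is a minimal sketch of how scores from several independent detectors could be combined into a single verdict. This is an illustration only, not Reality Defender's implementation: the `Detector` type, the `ensemble_score` function, the weights, and the 0.5 threshold are all hypothetical names and values chosen for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical interface: a detector maps raw media bytes to a
# probability in [0, 1] that the input is synthetic.
Detector = Callable[[bytes], float]


@dataclass
class EnsembleVerdict:
    score: float                  # weighted average of the model scores
    is_likely_fake: bool          # True when score >= threshold
    per_model: Dict[str, float]   # individual model scores, for auditing


def ensemble_score(
    media: bytes,
    detectors: Dict[str, Detector],
    weights: Dict[str, float],
    threshold: float = 0.5,       # assumed cut-off, not a real product setting
) -> EnsembleVerdict:
    """Combine several detector scores with a weighted average."""
    if not detectors:
        raise ValueError("at least one detector is required")

    # Run every detector on the same input.
    per_model = {name: detect(media) for name, detect in detectors.items()}

    # Normalize by the total weight so the result stays in [0, 1].
    total_weight = sum(weights[name] for name in per_model)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    score = sum(weights[name] * p for name, p in per_model.items()) / total_weight

    return EnsembleVerdict(score, score >= threshold, per_model)
```

One reason to combine models this way is that a generator tuned to evade one detector still has to evade the others, and the per-model scores remain available for forensic review.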

One of Reality Defender’s key use cases is its integration in highly sensitive and highly consequential environments such as call centers, where it monitors millions of calls in real time to detect voice-based deepfakes at the beginning of customer engagements. The platform is also deployed in video conferencing platforms, where it prevents impersonation attacks during sensitive meetings with key stakeholders. Additionally, Reality Defender is used as a powerful forensic tool, allowing agencies and enterprises to analyze past deepfakes and deepfakes in the wild.

As we worked closely with the Reality Defender team and tested their product, it became clear that they are emerging as a frontrunner in the deepfake detection space. Their efforts have already earned them significant recognition: in 2024, Reality Defender won “The Most Innovative Startup” at the RSA Conference Innovation Sandbox.

Deepfake Attacks Around the World

Financial Services

The stakes are particularly high for financial services. If businesses fail to protect their clients from deepfake attacks, the damage goes beyond a single transaction and leads to an irreparable, long-term loss of trust from existing and future clients.

In early 2024, the Chief Financial Officer of a British engineering firm was deepfaked in a US$25 million (HK$200 million) scam. A worker unknowingly sent the money to attackers after attending a video call with multiple people “that looked and sounded just like colleagues he recognized”. Notably, no internal systems were compromised during this attack.

Executives, C-suite members, and high-net-worth individuals are especially vulnerable to deepfake attacks. Private banking clients, for instance, have been targeted by attackers who impersonate them on phone calls to transfer money out of their accounts. These high-profile individuals tend to have content or media on YouTube, Google, or news sites, which gives attackers ample data to create convincing deepfakes. It is also important to secure not just executives but the direct reports of each key person and any individual with wiring authority.

The CEO of WPP (nearly $15 billion in annual revenue) was targeted by deepfake attackers who attempted to steal money by using a voice clone and YouTube videos to set up a video call with the CEO’s executives.

Mark Read, WPP’s CEO, warned his colleagues that WPP had “seen increasing sophistication in the cyber attacks on our colleagues, and those targeted at senior leaders in particular”.

Source: Financial Times

Government and Media

Social media acts as a catalyst, spreading disinformation and deepfakes like wildfire. Facebook, TikTok, Instagram, X, and private, unmanaged chat groups on WhatsApp and Telegram are fertile ground for the distribution of deepfakes.

In 2023, during the Slovak parliamentary elections, a “leaked” audio clip released two days before the vote appeared to capture a candidate claiming to have rigged the election. The recording spread across Facebook, Instagram, X, YouTube, and Telegram. Because the attack was strategically timed to fall within the 48-hour pre-election moratorium, it was difficult to contain this “deepfake wildfire”.

Deepfakes are widespread and can impact anyone

Deepfakes of Taylor Swift recently went viral on social media, used both for fake adult content and for malicious political manipulation.

In the summer of 2024, South Korea’s President made headlines by issuing an order to “root out” deepfake attackers. Telegram groups in South Korea were spreading inappropriate deepfakes of classmates, teachers, and military colleagues, many of whom were minors. One chat group had over 220,000 members.

Reality Defender team — highly specialized and deeply passionate

Reality Defender is led by a powerhouse team: Ben Colman (CEO, Co-founder), Ali Shahriyari (CTO, Co-founder), and Gaurav Bharaj (Distinguished AI Scientist, Co-founder).

From our first meeting with the team, we were impressed by their unique strengths — they’re a highly complementary combination of domain expertise, commercialization muscle, and technical prowess.

Ben was previously at Goldman Sachs, running initiatives in cybersecurity and blockchain tech. Ali, previously at Originate, has helped Fortune 500 companies build complex software systems used by millions of users globally. He was also the first hire at the AI Foundation, working with the CTO of OpenWeb. Gaurav, a Harvard PhD and Graduate Teaching Fellow, wrote the patent on deepfake detection in 2019, “Identification of neural-network-generated fake images”, with the CTO of Synthesia, Matthias Nießner. Gaurav continues to publish cutting-edge research in deepfake detection techniques.

Most importantly, they have built a world-class team of specialized and deeply passionate AI and software engineers and researchers, who have continued to impress us as we’ve worked together.

Looking ahead

The Reality Defender team works tirelessly, around the clock and around the world, to help protect people and businesses from the staggering threat of deepfakes.

We at Illuminate believe AI-based cyber attacks will continue to increase in both sophistication and scale, making this an inevitable arms race. As attackers continue to refine their tech, enterprises will face an ever-growing challenge to keep up.

We are thrilled to partner with Reality Defender. As Illuminate continues to invest in the transformative possibilities of “AI for good”, we are also excited to support cybersecurity companies safeguarding the world from the evolving advances in “AI for bad”.

If you would like to connect with the Reality Defender team or discuss this topic, or if you are building a next-generation enterprise technology solution and believe we could help, feel free to reach out to Peter Hung at ph@illuminatefinancial.com.